One is $latex \frac$, which is assumed available. Thus, we may first let’s assume we have the gradient of. From the forward perspective, we have, and then get. Let be the loss function and the purpose is to compute the gradient of, where the parameter is the parameter for the layer. What is Back-Propagation?Īs discussed, back-propagation is a method to compute the gradient. One loss layer may be the cross entropy. That is, where is the label index, is the corresponding output probability by the network with to-be-learned parameters.

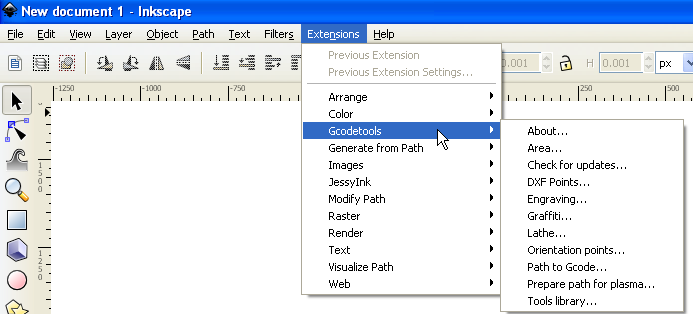

#Creating new layer in sheetcam how to

So how to change? A widely-used approach is to setup a loss layer, and the parameters are learned by the gradient descent algorithm to minimize the loss. To achieve the expected situation, we should change the paramters gradually. (Here we do not discuss about the overfitting)Īt the beginning, the layer parameters may be randomly initialized, and the output distribution may also be random, and does not satisfy our expectation. The network is trained so that the output probability on the label is 1 and all the other probabilities are 0. In the training phase, we have a lot of training data, described as multiple pairs of the image and the label. The input for the whole network is an image, and the output may be a probability distribution which indicates the possibility of the image being the corresponding label. How to Train a Deep Learning Network?Īssume the task is the single-label image classification. Here, we assume the form exists, no matter it is totally new or it is new to our existing implementation. In fact, if we can define a new layer and the new layer can really improve the accuracy or the speed, it may be a good academic paper.

We know the layer is just a function, and the forward propagation is to compute the output based on the input data. If we would like to add a new layer in the deep learning, we should first define the layer function. The different names of the layers refer to different forms of the function. The feature map may also go through pooling layer, LRN layer, e.t.c. The output may also serve as the input data of other layers.įor example, a raw color image, whose size is, can be first fed into a convolutional layer, and the output is generally called feature maps. The layer can be written as, where is the input data, is the parameter, and is the output data. Each layer is a function, and has input data and output data. In my understanding, deep learning is a neural network, composited of multiple layers. What is Caffe?Ĭaffe is an open source deep learning framework, and the main use case may be the image/video recognition. Next posts will continue on the implementation in the caffe codes. The change is interesting, so I’m thinking it may be good to take notes on this.įirst, let’s talk about some basic knowledge of deep learning.

#Creating new layer in sheetcam code

Recently, I found the caffe code changes a lot, especially in the terms of layer creating.
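To make the forward/backward view of a layer concrete, here is a minimal pure-Python sketch. It assumes a fully connected layer, chosen only because it is the simplest (the post's examples are convolution, pooling, and LRN layers), with $latex y = f(x, w)$ computed as $latex y_j = \sum_i w_{ji} x_i$. The class name `LinearLayer`, the toy loss `toy_loss` (half the sum of squared outputs, so that $latex \frac{\partial L}{\partial y} = y$), and the finite-difference check are hypothetical illustrations, not Caffe code. `backward` receives $latex \frac{\partial L}{\partial y}$ as given and applies the chain rule to return both the parameter gradient and the gradient handed to the previous layer.

```python
import random


class LinearLayer:
    """Hypothetical fully connected layer y = f(x, w), with y[j] = sum_i w[j][i] * x[i]."""

    def __init__(self, n_in, n_out, seed=0):
        rng = random.Random(seed)
        # Random initialization of the parameter w (n_out rows, n_in columns).
        self.w = [[rng.uniform(-0.5, 0.5) for _ in range(n_in)] for _ in range(n_out)]
        self.x = None

    def forward(self, x):
        # Forward propagation: compute the output from the input and the parameter.
        self.x = list(x)  # cache the input for the backward pass
        return [sum(w_ji * x_i for w_ji, x_i in zip(row, x)) for row in self.w]

    def backward(self, dL_dy):
        # dL/dy is assumed to be supplied by the layer above (or by the loss).
        # Chain rule for the parameter: dL/dw[j][i] = dL/dy[j] * x[i].
        dL_dw = [[g * x_i for x_i in self.x] for g in dL_dy]
        # Chain rule for the input: dL/dx[i] = sum_j dL/dy[j] * w[j][i],
        # which becomes the dL/dy of the previous layer.
        dL_dx = [sum(dL_dy[j] * self.w[j][i] for j in range(len(self.w)))
                 for i in range(len(self.x))]
        return dL_dw, dL_dx


def toy_loss(y):
    # Hypothetical loss L = 0.5 * sum(y_j^2), so dL/dy = y.
    return 0.5 * sum(v * v for v in y)


layer = LinearLayer(n_in=3, n_out=2)
x = [1.0, -2.0, 0.5]
y = layer.forward(x)
dL_dw, dL_dx = layer.backward(y)  # pass dL/dy = y

# Central finite-difference estimate of dL/dw[1][2] for comparison.
eps = 1e-6
orig = layer.w[1][2]
layer.w[1][2] = orig + eps
loss_plus = toy_loss(layer.forward(x))
layer.w[1][2] = orig - eps
loss_minus = toy_loss(layer.forward(x))
layer.w[1][2] = orig  # restore the parameter
numeric = (loss_plus - loss_minus) / (2 * eps)
print(numeric, dL_dw[1][2])  # the two numbers should agree closely
```

Comparing the analytic gradient with a finite-difference estimate, as at the end of the block, is a common way to test a new layer's backward pass before training with it.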
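The cross-entropy loss $latex L = -\log p_k$ can be sketched the same way. This assumes, as is common but not stated in the post, that the network turns its last-layer scores $latex z$ into probabilities with softmax; under that assumption the gradient with respect to the scores is $latex \frac{\partial L}{\partial z_j} = p_j - 1$ when $latex j = k$ and $latex p_j$ otherwise. The function names and the example scores are hypothetical.

```python
import math


def softmax(z):
    # Subtract the largest score before exponentiating for numerical stability.
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def cross_entropy(z, k):
    """Loss L = -log p_k with p = softmax(z) and k the label index."""
    # Computed as log-sum-exp minus z_k, which avoids taking log(0).
    m = max(z)
    return math.log(sum(math.exp(v - m) for v in z)) - (z[k] - m)


def cross_entropy_grad(z, k):
    """Gradient dL/dz_j = p_j - (1 if j == k else 0) for softmax + cross entropy."""
    p = softmax(z)
    return [p_j - (1.0 if j == k else 0.0) for j, p_j in enumerate(p)]


scores = [2.0, 1.0, 0.1]  # hypothetical last-layer outputs for one image
label = 0
print(cross_entropy(scores, label))
print(cross_entropy_grad(scores, label))
```

The gradient is negative only on the true label, so a gradient-descent step raises that score and lowers the others, pushing $latex p_k$ toward 1 as the training objective describes.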
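Putting the pieces together, here is a toy end-to-end sketch of training by gradient descent: a single fully connected layer produces scores, softmax and cross entropy give the loss, back-propagation gives the gradient, and each parameter takes a small step against it. Everything here is an illustrative assumption: the function name `train`, the four labeled 2-D points (with a constant 1.0 appended as a bias input), the learning rate, the epoch count, and the choice of per-example (stochastic) updates rather than full-batch gradient descent.

```python
import math
import random


def softmax(z):
    m = max(z)
    e = [math.exp(v - m) for v in z]
    s = sum(e)
    return [v / s for v in e]


def train(data, n_in, n_classes, lr=0.5, epochs=50, seed=0):
    """Per-example gradient descent for scores z = w x and loss L = -log p_k."""
    rng = random.Random(seed)
    # Random initialization: the initial output distribution is arbitrary.
    w = [[rng.uniform(-0.1, 0.1) for _ in range(n_in)] for _ in range(n_classes)]
    history = []
    for _ in range(epochs):
        total = 0.0
        for x, k in data:
            # Forward: input x -> scores z -> probabilities p -> loss -log p_k.
            z = [sum(w_j[i] * x[i] for i in range(n_in)) for w_j in w]
            p = softmax(z)
            total += -math.log(p[k])
            # Backward: dL/dz_j = p_j - [j == k], then dL/dw[j][i] = dL/dz_j * x[i].
            for j in range(n_classes):
                g = p[j] - (1.0 if j == k else 0.0)
                for i in range(n_in):
                    w[j][i] -= lr * g * x[i]  # gradient-descent step
        history.append(total / len(data))  # average loss seen during this epoch
    return w, history


# Hypothetical toy data: (features + constant bias input 1.0, label index).
data = [
    ([1.0, 2.0, 1.0], 0),
    ([2.0, 1.5, 1.0], 0),
    ([-1.0, -2.0, 1.0], 1),
    ([-2.0, -1.0, 1.0], 1),
]
w, history = train(data, n_in=3, n_classes=2)
print(history[0], history[-1])
```

The recorded average loss per epoch should shrink as the parameters move from their random initialization toward values that put most of the probability on the correct label.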